Motivation

Before this project, I was a member of the 10th student robot team of Xi’an Jiaotong University for the ABU Asia-Pacific Robot Contest 2011, where I mainly worked on detection algorithms for automatic robot II. During that period, we combined a video camera and a laser radar to build a 3D robot vision system. When Microsoft released Kinect in late 2010, I believed this technology would become a key sensor in robotics. In April 2011, the official Kinect SDK was released, and in September 2011 MSRA chose “Kinect” as the theme of its annual “Microsoft Student Challenge”. I invited other team members to enter the contest with me and build a robot based on this new device.

Description

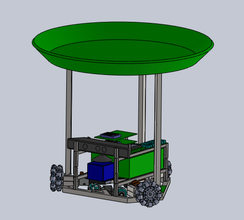

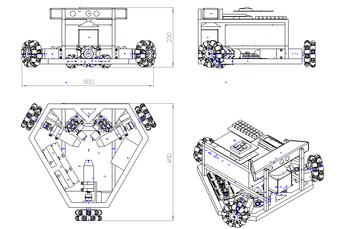

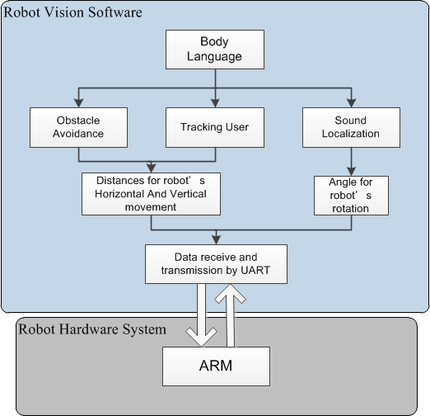

Our robot's controller is the user’s body language. We defined five types of body commands that a single user can use to control the robot. The robot detects the user's skeleton via Kinect and then executes the mission that corresponds to the recognized command.
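To give a rough idea of how skeleton data can be turned into commands, here is a minimal Python sketch. It is not our actual implementation: the five command names, the joint names, the thresholds, and the coordinate convention (metres, `y` pointing up, `x` increasing toward the user's right) are all hypothetical and chosen only for illustration.

```python
from typing import Dict, Tuple

# One joint position as (x, y, z) in metres. Assumed convention for this
# sketch only: y points up, x increases toward the user's right.
Joint = Tuple[float, float, float]


def classify_command(joints: Dict[str, Joint],
                     raise_margin: float = 0.10,
                     reach: float = 0.35) -> str:
    """Map one skeleton frame to a hypothetical command name.

    The five commands below are illustrative placeholders, not the
    commands defined in the project.
    """
    head_y = joints["head"][1]
    left_hand = joints["hand_left"]
    right_hand = joints["hand_right"]

    # A hand counts as "raised" when it is clearly above the head.
    left_up = left_hand[1] > head_y + raise_margin
    right_up = right_hand[1] > head_y + raise_margin

    if left_up and right_up:
        return "stop"
    if right_up:
        return "forward"
    if left_up:
        return "backward"

    # A hand counts as "extended" when it is far outside its shoulder.
    if left_hand[0] < joints["shoulder_left"][0] - reach:
        return "turn_left"
    if right_hand[0] > joints["shoulder_right"][0] + reach:
        return "turn_right"

    # No gesture recognized: the robot keeps doing nothing.
    return "idle"


# Example frame: user standing about 2 m away with the right hand raised.
frame = {
    "head": (0.0, 1.6, 2.0),
    "shoulder_left": (-0.2, 1.4, 2.0),
    "shoulder_right": (0.2, 1.4, 2.0),
    "hand_left": (-0.25, 0.9, 2.0),
    "hand_right": (0.25, 1.8, 2.0),
}
print(classify_command(frame))
```

A real system would probably also smooth the joint positions over several frames and require a gesture to be held for a short time before acting on it, so that a single noisy frame does not trigger a mission.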

The demo videos are the easiest way to understand how our robot works.

Demo

Our Project's MSRA Official Video

Microsoft Student Challenge 2012 Final Demo